This document is based on my own investigations. It may contain errors. The term fairness is not standard.

Suppose we have a 7×1 pixel image, whose pixels have the following (grayscale) colors:

| 000 | 000 | 000 | 250 | 000 | 000 | 000 |

and we want to resize it to 5×1.

What do we expect when we resize it? We’re reducing it to 5/7 of its original size, so we might reasonably expect each original pixel to contribute 5/7 of its brightness to the new image. Only the center pixel has nonzero brightness, and 5/7 of 250 is about 179, so we might expect the new image to look like this:

| 000 | 000 | 179 | 000 | 000 |

Technical note: This discussion ignores gamma correction. Assume that all color values use a linear brightness scale.

Another possibility is that some of the brightness could be distributed to adjacent pixels, something like this:

| 000 | 015 | 149 | 015 | 000 |

But even so, it should add up to about 179.

Let’s try it and see what happens.

I don’t want to worry about interference from the top and bottom edges of the image, so I’ll actually take a 7×7 image, resize it to 5×7, and look only at the middle row.

I’ll use a triangle filter, to keep it simple. Using ImageMagick’s convert utility:

```shell
convert 7.png -filter triangle -resize '5x7!' 5.png
```

Here’s what I get:

| 000 | 000 | 159 | 000 | 000 |

That doesn’t seem right. The image has only 159 “brightness units”, when we expected 179. Resizing the image has made it darker, on average. Why?

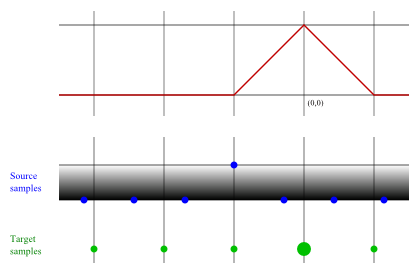

Here’s a depiction of a triangle filter being used to calculate the value of the central pixel:

There are three source pixels that contribute to the value of the central target pixel. Here’s how the calculations are done:

| Source pixel | Filter value | Pixel value | Product |
|---|---|---|---|
| left of center | 2/7 | 0 | 0 |
| center | 1 | 250 | 250 |
| right of center | 2/7 | 0 | 0 |
| Total: | 11/7 | | 250 |

The unnormalized target pixel value is 250. To normalize it, divide by 11/7, giving 159.09.
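The arithmetic in the table can be checked with a few lines of Python. This is only a sketch of the calculation described above, not ImageMagick's actual code; the `triangle` helper and the variable names are my own, and exact fractions are used so the 2/7 and 11/7 values come out without rounding.

```python
from fractions import Fraction

SRC_W, DST_W = 7, 5
scale = Fraction(DST_W, SRC_W)  # 5/7: the filter is stretched by 7/5 when shrinking


def triangle(x):
    """Triangle (tent) filter: 1 at x = 0, falling linearly to 0 at |x| = 1."""
    return max(Fraction(0), 1 - abs(x))


# The central target pixel sits exactly over source pixel 3.
center = Fraction(3)
pixels = {2: 0, 3: 250, 4: 0}  # source index -> pixel value

# Filter value for each contributing source pixel (hypothetical helper names).
weights = {i: triangle((i - center) * scale) for i in pixels}
total_weight = sum(weights.values())
unnormalized = sum(weights[i] * pixels[i] for i in pixels)
value = unnormalized / total_weight

print(total_weight)            # 11/7
print(round(float(value), 2))  # 159.09
```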

All the other target pixels should be completely black. When we center the filter on any other target sample, all the source samples within its domain are 0. For example:

So, by our manual calculations, the target image should have a central pixel whose value is 159, and all other pixels should have a value of 0. And that’s exactly what we got. But it still doesn’t seem right.
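To repeat the manual calculation for every target pixel at once, here is a minimal one-dimensional resampler. It is a sketch under my own assumptions: it only handles shrinking, it ignores source samples that fall outside the image and renormalizes, and `resize_row` is a made-up name rather than anything from ImageMagick.

```python
from fractions import Fraction


def triangle(x):
    """Triangle (tent) filter: 1 at x = 0, falling linearly to 0 at |x| = 1."""
    return max(Fraction(0), 1 - abs(x))


def resize_row(src, dst_w):
    """Shrink one row of pixels with a triangle filter (assumes dst_w <= len(src))."""
    src_w = len(src)
    scale = Fraction(dst_w, src_w)
    out = []
    for j in range(dst_w):
        # Center of target pixel j, expressed in source pixel coordinates.
        center = (j + Fraction(1, 2)) / scale - Fraction(1, 2)
        # Distances are multiplied by scale because the filter is stretched.
        weights = [triangle((i - center) * scale) for i in range(src_w)]
        total = sum(weights)
        # Normalize so the weights for this target pixel sum to 1.
        out.append(sum(w * p for w, p in zip(weights, src)) / total)
    return out


row = resize_row([0, 0, 0, 250, 0, 0, 0], 5)
print([round(v) for v in row])  # [0, 0, 159, 0, 0]
```

Because each target pixel's weights are normalized, a solid-color row stays solid: `resize_row([250] * 7, 5)` gives 250 everywhere.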

Actually, the problem is our expectation. The resizing algorithm used here does not guarantee that each source sample will have an equal effect on the target image. Some of them will have less effect, and some more, not for any particular reason, but due to the details of how the source samples happen to line up with the target samples. The algorithm is unfair in this sense.

In retrospect, maybe this should have been obvious. If you know how a box filter works, it’s obvious that it is unfair. And there’s no reason to think that merely changing the shape of the filter would somehow make it perfectly fair.

The technical explanation for the difference in this example is that the filter value total was anomalously high when evaluating the central pixel. On average, we expect it to be 7/5 (1.40), but for this pixel it was 11/7 (1.57). The pixel in the center drew the short straw, and didn’t get its fair share of influence on the target image.
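One way to put a number on this is to add up, for each source pixel, the normalized weight it receives from every target pixel. The sketch below does that for the 7-to-5 triangle case, using the same assumptions as the manual calculation (out-of-image samples ignored, weights renormalized); `influence` is a name I made up. A perfectly fair algorithm would give every source pixel 5/7.

```python
from fractions import Fraction


def triangle(x):
    """Triangle (tent) filter: 1 at x = 0, falling linearly to 0 at |x| = 1."""
    return max(Fraction(0), 1 - abs(x))


def influence(src_w, dst_w):
    """Total normalized weight each source pixel gets across all target pixels."""
    scale = Fraction(dst_w, src_w)
    totals = [Fraction(0)] * src_w
    for j in range(dst_w):
        center = (j + Fraction(1, 2)) / scale - Fraction(1, 2)
        weights = [triangle((i - center) * scale) for i in range(src_w)]
        norm = sum(weights)
        for i, w in enumerate(weights):
            totals[i] += w / norm
    return totals


shares = influence(7, 5)
print([str(s) for s in shares])
# ['2/3', '7/9', '73/99', '7/11', '73/99', '7/9', '2/3']
print(Fraction(5, 7))  # the fair share: 5/7
```

The center pixel's share is 7/11 (about 0.64), the smallest of the seven and noticeably below 5/7 (about 0.71). The shares still add up to 5, so what the center pixel loses, its neighbors gain.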

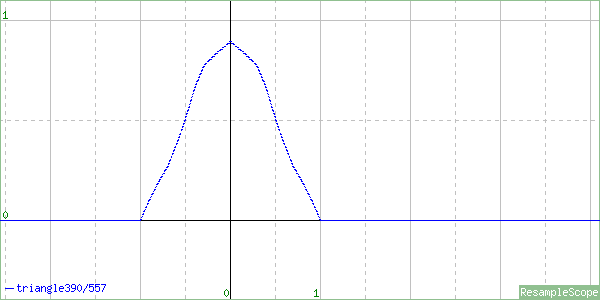

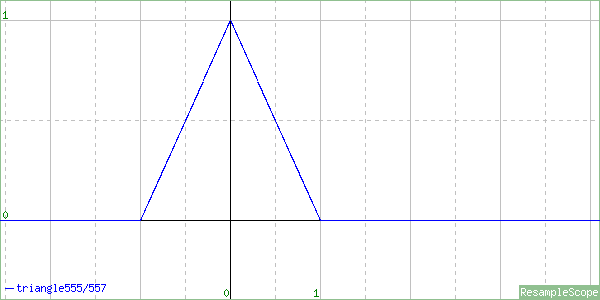

I think this is essentially why ResampleScope can produce warped images of a filter, such as this:

when the filter that was used actually looks like this:

What should you do about this problem? Here are some ideas.

In most cases, the best answer is “nothing”. It’s not a big problem, and most cures are worse than the disease.

A filter with a large “support” region, such as Lanczos-3, won’t be as affected by this problem as a narrow filter like a triangle filter. More source samples will be involved in the calculation of each target sample, making the values more likely to average out to something more fair.

I know of one common algorithm that is completely fair: pixel mixing. All filters with a fixed shape are unfair, but pixel mixing does not have a fixed shape; it varies depending on the scaling factor. That makes it possible for it to be fair.

Pixel mixing is a usable algorithm for reducing image size, but it may not do a good job with images that have thin lines, or small repetitive patterns.
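For comparison, here is a one-dimensional sketch of pixel mixing as I understand it: each target pixel is the average of the stretch of source row it covers, with partially covered source pixels counted in proportion to the overlap. The function name `pixel_mix_row` is hypothetical.

```python
from fractions import Fraction


def pixel_mix_row(src, dst_w):
    """Shrink one row by area averaging (pixel mixing)."""
    span = Fraction(len(src), dst_w)  # source pixels covered by one target pixel
    out = []
    for j in range(dst_w):
        lo, hi = j * span, (j + 1) * span
        acc = Fraction(0)
        for i, p in enumerate(src):
            # Length of the overlap between source pixel [i, i+1] and [lo, hi].
            overlap = min(hi, i + 1) - max(lo, i)
            if overlap > 0:
                acc += overlap * p
        out.append(acc / span)
    return out


row = pixel_mix_row([0, 0, 0, 250, 0, 0, 0], 5)
print([round(v) for v in row])  # [0, 0, 179, 0, 0]
```

Wherever the bright pixel is placed in the source row, the target values add up to exactly 250 × 5/7, which is the sense in which this method is fair.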

Blurring the filter changes its width, which will affect its fairness. But I don’t know how to calculate the best amount to blur. And, obviously, it has other effects that are usually undesirable.

You might think you could fix this by taking a different approach: instead of having the target samples “take brightness from” the source samples, have the source samples “send brightness to” the target samples.

Although such an algorithm could be fair, it has a worse problem than unfairness: solid-color regions will not remain a solid color. And any attempt to fix this problem will probably take you right back where you started, to an unfair filter.
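Here is a sketch of what such a “send brightness to” algorithm might look like, so the problem can be seen in numbers. This is my own illustrative construction (the name `scatter_row` and the exact normalization are assumptions): each source pixel hands out 5/7 of its brightness, split among target pixels in proportion to the triangle filter weights.

```python
from fractions import Fraction


def triangle(x):
    """Triangle (tent) filter: 1 at x = 0, falling linearly to 0 at |x| = 1."""
    return max(Fraction(0), 1 - abs(x))


def scatter_row(src, dst_w):
    """Shrink a row by letting each source pixel distribute its brightness."""
    scale = Fraction(dst_w, len(src))
    centers = [(j + Fraction(1, 2)) / scale - Fraction(1, 2) for j in range(dst_w)]
    out = [Fraction(0)] * dst_w
    for i, p in enumerate(src):
        weights = [triangle((i - c) * scale) for c in centers]
        total = sum(weights)  # normalize per SOURCE pixel, not per target pixel
        for j, w in enumerate(weights):
            out[j] += p * scale * w / total
    return out


spike = scatter_row([0, 0, 0, 250, 0, 0, 0], 5)
print([round(v) for v in spike])  # [0, 0, 179, 0, 0]

solid = scatter_row([100] * 7, 5)
print([round(v) for v in solid])  # [102, 92, 112, 92, 102]
```

The single bright pixel now contributes exactly its fair share, but a solid row of 100s comes out visibly uneven.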

Another crazy idea for fixing the algorithm: instead of basing the target sample on a small number of source samples, base it on an infinite number of source samples; i.e., fit a curve to the source samples, and take its integral.

This topic is too involved to discuss in much detail here. While this approach is probably fairer, it tends to blur the image.

Note that pixel mixing is the equivalent of taking the integral of a nearest-neighbor function. In this atypical case, the image does not get blurred.